介紹

雲端大型語言模型(LLM)趨勢:更大的模型規模、更長的上下文長度、更便宜的推理價格。邊緣或設備上的小型語言模型(SLM):增強用戶體驗,更小、更快和個性化。

兩種混合式AI類型

以下是雲端中心混合式AI和設備中心混合式AI之間關鍵差異的比較表:

| 特徵/方面 | 雲端中心混合式AI | 設備中心混合式AI |

|---|---|---|

| 主要焦點 | 雲端將任務分配給設備 | 設備作為核心點將AI任務分配給雲端 |

| 應用 | AIoT | 個人代理 |

| 範例 | AWS Greengrass、Edge Impulse | Apple Intelligence、Qualcomm Hybrid AI |

| 設備上的處理 | 有限;主要依賴於雲端 | 顯著;許多任務在本地執行 |

| 雲端依賴性 | 高;雲端處理大多數工作負載 | 中等;雲端用於複雜任務 |

| 個性化 | 由於依賴於雲端,個性化較少 | 通過設備上的AI高度個性化 |

| 延遲 | 因依賴於雲端而可能較高 | 通過本地處理延遲較低 |

| 安全和隱私 | 取決於雲端安全措施 | 通過本地數據處理增強隱私 |

| 協調管理 | 對協調管理重視較少 | 對於管理設備和雲之間的工作負載至關重要 |

| 可擴展性 | 通過雲資源高度可擴展 | 受限於設備能力 |

雲中心混合人工智能(物聯網 + 人工智能):將人工智能從雲端卸載到邊緣設備。

AWS Greengrass 架構

![[Pasted image 20240808161946.png]]

Edge Impulse 架構

![[Pasted image 20240808162648.png]]

設備中心混合人工智能:on-device 是錨點。雲端用於 offload device 無法執行的任務。

蘋果智能:

- 設備端:人工智能編排和蘋果3B語言(SLM)

- 雲端:大型語言模型(LLM) - 30億或70億

關鍵在於編排,協調/編排路由和調度?

隱私和安全在系統層面上很重要?

![[Pasted image 20240808143042.png]]

高通混合人工智能

設備上的神經網絡或基於規則的仲裁者將決定是否需要雲端,無論是為了更好的模型還是檢索互聯網信息。

![[Pasted image 20240808161040.png]]

設備中心混合人工智能

混合人工智能的關鍵組成部分是什麼?

- 路由器/仲裁者:協調AI工作負載在何處以及何時進行

- 安全性和隱私

- 性能(延遲、準確性)分析

設備中心混合人工智能 + RAG

個性化和定製化

![[Pasted image 20240808174944.png]]

Appendix A: English Version

Introduction

Cloud LLM (large language model) trend: bigger model size, longer context length, cheaper inference price. Edge or on-device SLM (small language model): enhance user experience, smaller, faster, and personalization.

Two Types of Hybrid AI

Here’s a comparison table highlighting the key differences between Cloud-Centric Hybrid AI and Device-Centric Hybrid AI:

| Feature/Aspect | Cloud-Centric Hybrid AI | Device-Centric Hybrid AI |

|---|---|---|

| Primary Focus | Cloud offload tasks to device | Device as the central point offload AI to cloud |

| Application | AIoT | Personal agent |

| Examples | AWS Greengrass, Edge Impulse | Apple Intelligence, Qualcomm Hybrid AI |

| On-Device Processing | Limited; primarily relies on cloud | Significant; performs many tasks locally |

| Cloud Dependency | High; cloud handles most workloads | Moderate; cloud used for complex tasks |

| Personalization | Less personalized due to reliance on cloud | Highly personalized through on-device AI |

| Latency | Potentially higher due to cloud reliance | Lower latency with local processing |

| Security and Privacy | Dependent on cloud security measures | Enhanced privacy through local data handling |

| Orchestration | Less emphasis on orchestration | Critical for managing workloads between device and cloud |

| Scalability | Highly scalable with cloud resources | Limited by device capabilities |

Cloud-centric hybrid AI (IoT + AI): Cloud offload AI to edge device.

AWS Greengrass architecture ![[Pasted image 20240808161946.png]]

Edge Impulse architecture ![[Pasted image 20240808162648.png]]

Device-Centric Hybrid AI: Device is the anchor point. Cloud is to offload task that device cannot perform

Apple Intelligence:

- On-device: AI orchestration and Apple 3B language (SLM)

- Cloud: Large Language Model (LLM) - 30 or 70B

The key is orchestration, coordinate/orchestrate routing and scheduling? Privacy and security is important on a system level?

![[Pasted image 20240808143042.png]]

Qualcomm Hybrid AI

On-device neural network or rules-based arbiter will decide if the cloud is needed, whether for a better model or retrieving internet information.

![[Pasted image 20240808161040.png]]

Device-Centric Hybrid AI

What are the key components of Hybrid AI?

- Router/Arbiter: orchestrate what AI workload is on which side and when

- Security and privacy

- Performance (latency, accuracy) analysis

Device-Centric Hybrid AI + RAG

Personalisations and customization

![[Pasted image 20240808174944.png]]

(Enterprise) Edge Server

NIM (Nvidia Inference Micro-service)

Block diagram first. It seems everything is done in the NIM, self-sufficient, nothing to do with the cloud!

The nuance is RAG, part of the micro-service in NIM.

https://developer.nvidia.com/blog/build-an-agentic-rag-pipeline-with-llama-3-1-and-nvidia-nemo-retriever-nims/

![[Pasted image 20240808145302.png]]

![[Pasted image 20240808145345.png]] ![[Pasted image 20240808160413.png]]

Reference

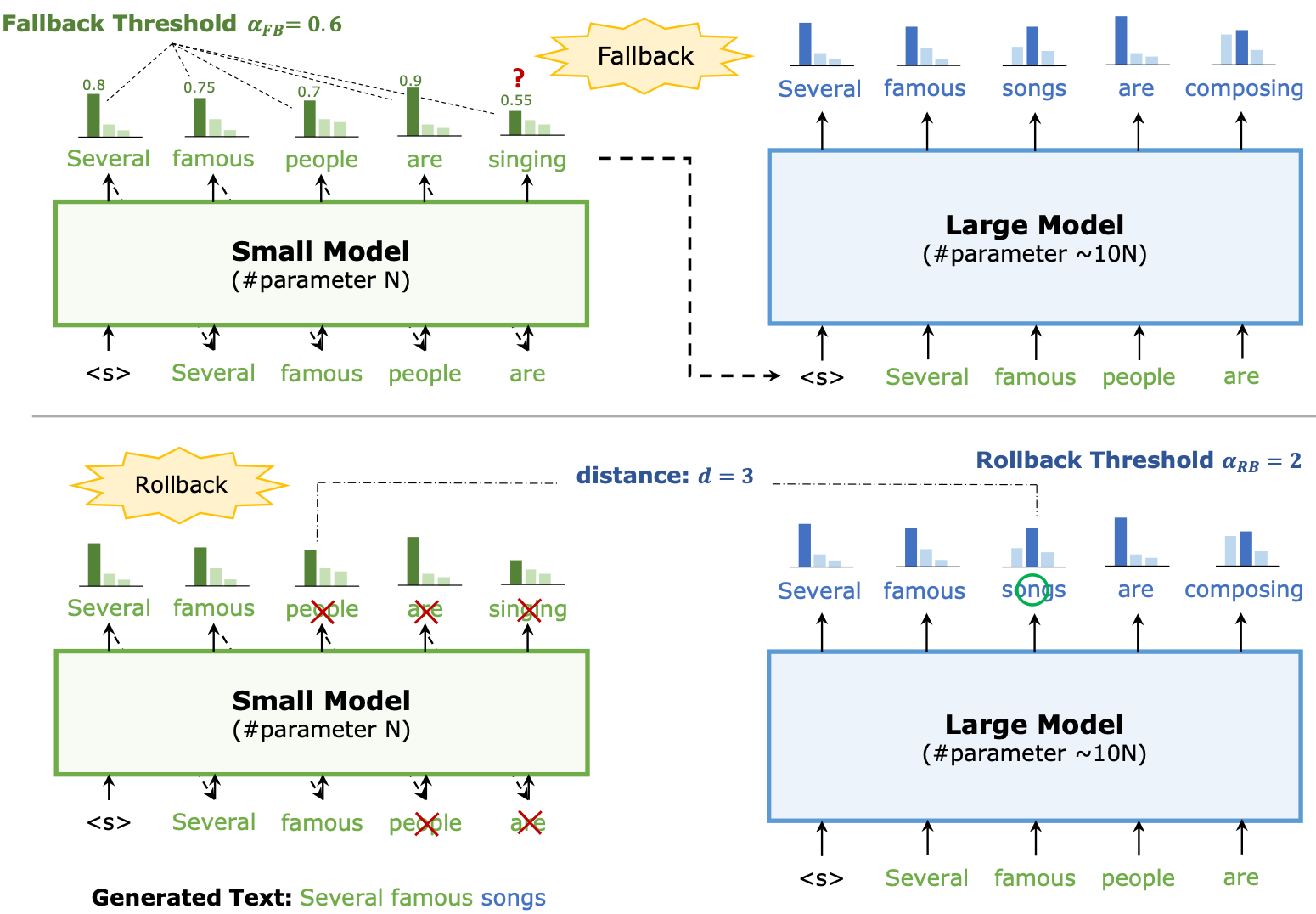

- Speculative Decoding with Big Little Decoder!!

- https://arxiv.org/abs/2302.07863

-

Cloud-edge hybrid SpD! [2302.07863] Speculative Decoding with Big Little Decoder (arxiv.org)

- Apple Intelligence: https://x.com/Frederic_Orange/status/1804547121682567524/photo/1

- Qualcomm hybrid AI white paper: https://www.qualcomm.com/content/dam/qcomm-martech/dm-assets/documents/Whitepaper-The-future-of-AI-is-hybrid-Part-1-Unlocking-the-generative-AI-future-with-on-device-and-hybrid-AI.pdf

前言

Big-Little Speculative Decode 主要是解決 autoregressive generation speed 太慢的問題。

此技術的核心便在於如何儘可能又快又準地生成 draft token,以及如何更高效地驗證 (verification)。

其他的應用:假設大小模型。大模型在雲,小模型在端。

- 小模型 Predict the response length

- 小模型 predict local or cloud tasks.

Speculative Decoding with Big Little Decoder

[paper] [paper reading]

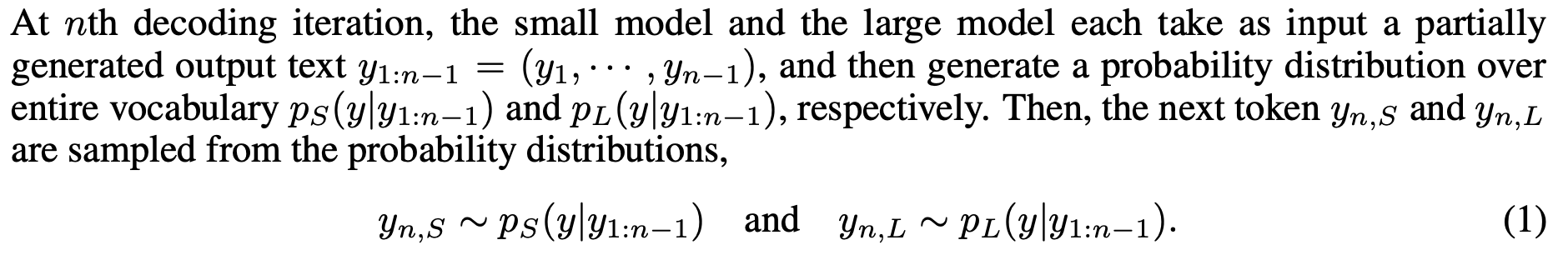

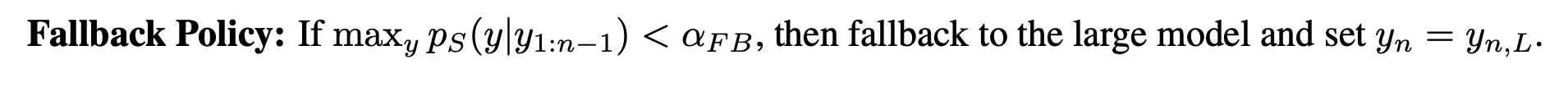

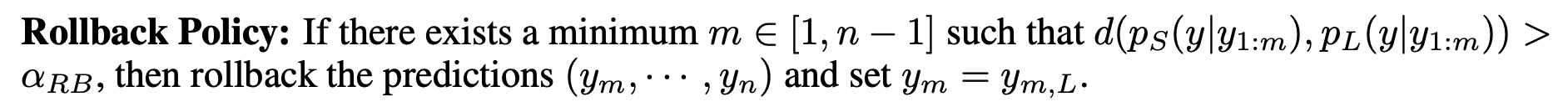

本文把是否需要大模型進行確認的權力交給了小模型,稱之爲 Fallback。Fallback 之後,對於兩次 Fallback 之間小模型生成的 token,引入 Rollback 確保其性能。

| 在第 n 次解碼迭代中,小模型和大模型各自輸入一個部分生成的輸出文本 $ y_{1:n-1} = (y_1, \cdots, y_{n-1}) $,然後分別生成一個在整個詞彙表上的概率分佈 $ p_S(y | y_{1:n-1}) $ 和 $ p_L(y | y_{1:n-1}) $。接著,從概率分佈中取樣下一個詞 $ y_{n,S} $ 和 $ y_{n,L} $: |

| $y_{n,S} \sim p_S(y | y_{1:n-1}) $ 以及 $ y_{n,L} \sim p_L(y | y_{1:n-1}) $ |

| 回退策略 (Fallback Policy):如果 $ \max_y p_S(y | y_{1:n-1}) < \alpha_{FB} $,則回退到大模型並設置 $ y_n = y_{n,L} $。 |

| 回滾策略 (Rollback Policy):如果存在一個最小的 $ m \in [1, n-1] $ 使得 $ d(p_S(y | y_{1:m}), p_L(y | y_{1:m})) > \alpha_{RB} $,則回滾預測 $ (y_m, \cdots, y_n) $ 並設置 $ y_m = y_{m,L} $。 |

具體來說,一旦小模型在當前 token 輸出機率的最大值低於設定的閾值 𝛼𝐹𝐵,就進行 Fallback,開始引入大模型進行 verify。在 verify 過程中,計算每個 token 上大小模型輸出機率之間的距離 𝑑,一旦 𝑑 大於設定的閾值 𝛼𝑅𝐵,就將此 token 改爲大模型的輸出,並讓小模型在後續從這個 token 開始生成。

此方法無法確保輸出與原模型完全一致。實驗的模型比較小,未超過 1B。

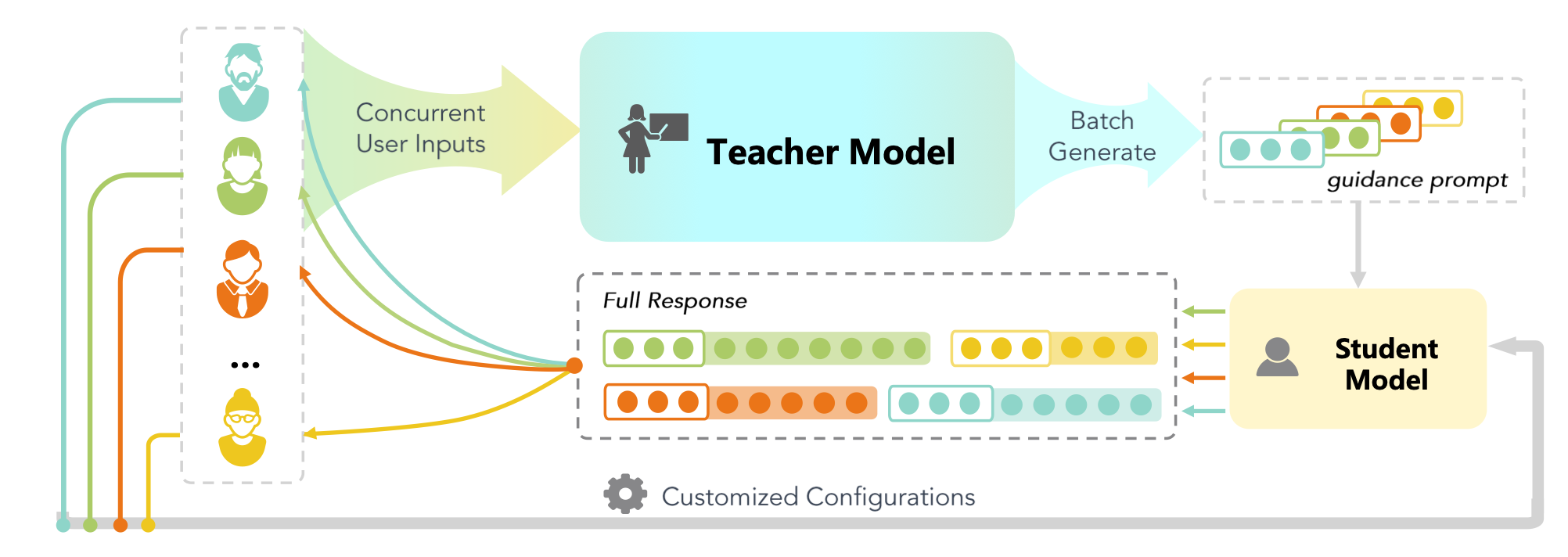

GKT: A Novel Guidance-Based Knowledge Transfer Framework For Efficient Cloud-edge Collaboration LLM Deployment

[paper] [paper reading]

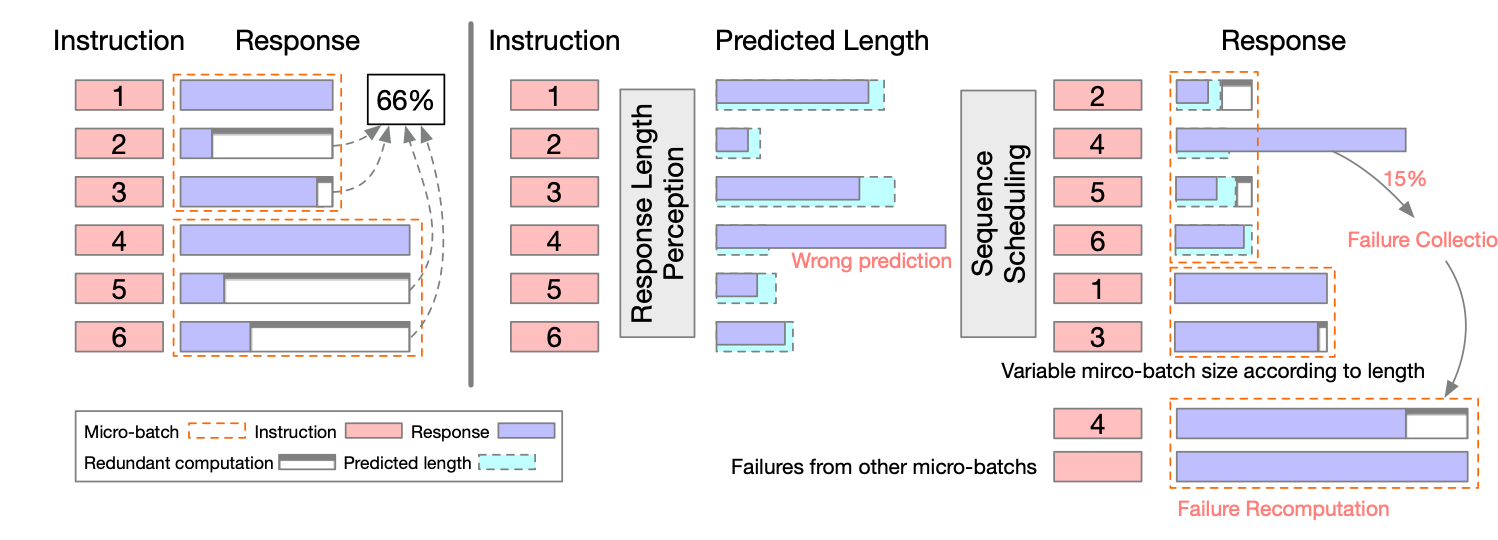

Response Length Perception and Sequence Scheduling: An LLM-Empowered LLM Inference Pipeline

[paper] [paper reading]

這個 paper 說明:因為 LLM 可以“說話”,可以直接利用這個特點,詢問 LLM 得到資訊作為後續性能優化。

這裡舉兩個例子:(1) 請 LLM 先估計回答的長度;(2) 請 LLM 先估計問題的難度。

估計回答的長度:

利用如下的 prompt. 如此 LLM 在回答之前,會先估計回答的長度。對於多個 batches 的回覆,可以先排程而有跟好的優化結果。

Create a fun math question for children.

Before responding to the above instruction, you have to predict the length of your response. Print the estimated number of words in your response in the first line. Then change to a new line to respond to the instruction.

ChatGPT

16

Imagine you have 10 apples and you give 3 to your friend. How many apples do you have left? 🍎

不過有幾個問題:

- 使用 words 比 tokens 更準確。

- 額外的 prompt 實際上影響回覆。 (1) 回覆的長度本身就是變動的, depending on temperature. (1) LLM 似乎會根據回覆的長度,自動控制回覆的內容。

- 這個估計並不準確,depending on LLM size. GPT4 表現最好,但是也不是 100%. 還是需要補救措施。

估計回答的難度:

- 看起來 GPT4o 和 Llama3 8B 對於問題的判斷都還不錯。不過可能要做更完整的測試,利用 MMLU 或其他 terst dataset.

Assuming there is a 10-times more powerful model than you are. I will ask a question. You need to tell me if it’s better for you or the more powerful model to answer.

Question: what’s the capital of England.

ChatGPT

It’s better for me to answer this question.

The capital of England is London.

Question: what is the solution of Riemann Hypothesis

ChatGPT

It’s better for the more powerful model to answer this question.

The Riemann Hypothesis is an unsolved problem in mathematics and requires deep expertise and advanced capabilities to address comprehensively.

Llama3 8B

Assuming there is a 10-times more powerful model than you are. I will ask a question. You need to tell me if it’s better for you or the more powerful model to answer.

Question: The capital of England is London.

Llama

A simple question!In this case, it’s definitely better for me to answer. The capital of England is a well-known fact, and I can provide the correct answer quickly and accurately. The answer is London.The more powerful model wouldn’t be able to provide any additional insight or complexity in this case, so I’m happy to take on the task!

Question: what is the integration of cosine function?

Llama

What a great question!After analyzing the question, I think it’s more suitable for the more powerful model to answer. The integration of the cosine function is a complex mathematical problem that requires a higher level of computational power and mathematical knowledge than I possess.I can try to provide a simple answer, but it would likely be incomplete or inaccurate. The more powerful model, on the other hand, would be able to provide a more detailed and accurate answer, possibly involving advanced mathematical techniques and symbolic manipulation.So, I recommend asking the more powerful model to answer this question.

Question: what’s the height of Mt. Everest?

Llama

A simple question!In this case, it’s definitely better for me to answer. The height of Mt. Everest is a well-known fact, and I can provide the correct answer quickly and accurately. The height of Mt. Everest is 8,848 meters (29,029 feet) above sea level.The more powerful model wouldn’t be able to provide any additional insight or complexity in this case, so I’m happy to take on the task!

To Do:

- 設計一個 LLM test using Llama3 8B. 然後評估錯誤的比例和 dispatch 到 GPT4 的比例!看難度是否可以正確指到大 model.

- 設計特殊的 token 估計長度和難度。

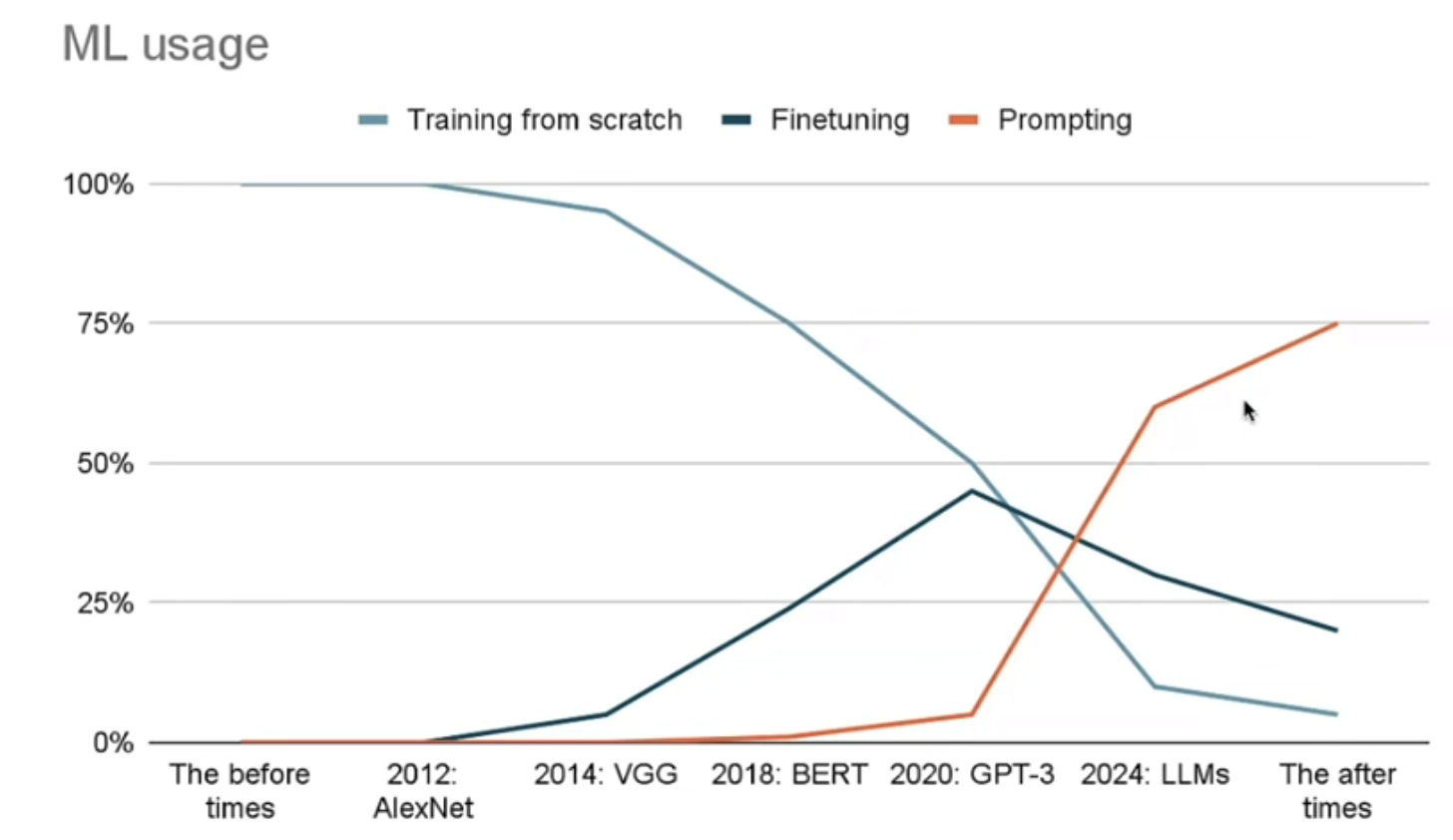

In context prompting 比 finetuning 更實用。